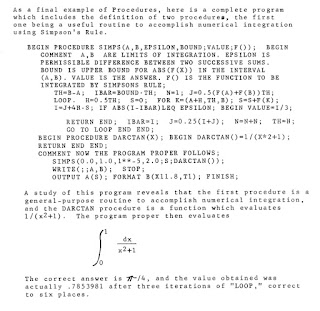

As noted on my previous post, I have managed to reproduce most of the missing subroutines for my old copy of the Burroughs Algebraic Compiler for the Burroughs 205. This has been quite an educational experience and I thought it might be worth taking some time to share it with the (hopefully, numerous) readers of this blog.

Background

I had sadly neglected to dump enough blocks of tape from the University of Portland's copy of the compiler back in 1967 when I left to go "back East" to graduate school. The listing followed me around for many years accompanied by a few Burroughs manuals and some CARDATRON coding forms.

It wasn't until I got my own 205 emulator running to the point of successful compiles in the mid 2000s that I discovered the Algol compiler wanted to read blocks of tape beyond the 231 that I had dumped forty years before.

Examining the tape listing, I discovered a little bit of good news. I had complete copies of the Fixed-to-Float and Float-to-Fixed routines and of the LOG function. This was enough for me to reverse-engineer Don Knuth's scheme for structuring the subroutines which are relocatable when attached to a program.

My initial plan for reconstruction was simple: try to code a Square Root subroutine and insert it into the correct location on the tape and see if it would work.

Now, square roots were something I knew at least a bit about. The "Introduction to Computing I" class at the University of Portland in 1963-7 required coding a Newton's Method square root routine in machine language that eventually would become the heart of a student's quadratic equation solver. Finish that project successfully and an engineering or science major had completed the last of the requirements for the course. Two credits from the Math Department, thank you.

But this was a slightly bigger problem. Our classroom problem was coded as a fixed point routine since the University's 1963-5 system didn't have a floating point cabinet. Now I needed a routine that works reliably on numbers from 10 to the minus 50th power up to 10 to the plus 50th. What would I use for my initial estimate and how would I check to see if I had run enough iterations to quit? And, oh yes, it needed to fit in 26 words of memory!

Newton's Method, sometimes referred to as "divide and average" has long been used to calculate square roots. There is a good quick explanation of it at the start of this article, just in case you have forgotten or thought that square roots have always been available on your computer by writing Y=SQRT(X). A deeper explanation of the mathematics behind the method can be found in this 2015 MIT course material. The author gives credit to the ancient Babylonians using it around 1000 B.C.

I decided to cheat, rather than reinvent the wheel. I picked up the BALGOL listing for the Burroughs 220 that been developed at Shell Oil, Huston, to see how they did it.

This contained more instructions than I was expecting - and it looked very little like the method that Issac Newton had used, starting off with multiplication and addition of three constants. Hmmmm!

But, I went ahead and translated it into assembler code for the 205, ending up with 57 words. (220 instructions are considerably more powerful than 205 instructions, as we will see later.)

I ran the program and looked at the results:

1 1.0000000

2 1.4142136

3 1.7320508

...

16 3.9999998

Wait a minute! What have I done wrong? Shouldn't the square root of 16 be 4? This is close, but... I spent a good deal of time looking for the flaw in my implementation, but to no avail.

I decided to go back into the literature and look for guidance. But, it turns out that not much current publication is devoted to calculating square roots in machine language. I turned to older literature.

The first square rooting that took place on a commercial machine that I could find was on the IBM 604 calculating punch. This machine deserves a great deal more respect than it gets. Released in 1949, it employed about 1200 vacuum tubes to perform addition, subtraction, multiplication and division at electronic speed. Programmable via a wired plug board with up to 60 steps, this was the common mathematician's "computer" of the era. As the heart of IBM's Card Programmed Calculator (CPC) the 604 was the scientific calculator that the early computer vendors sought to displace from universities and laboratories. IBM built (and very profitably rented) over 5,000 of them. There were apparently still over 400 of the units running in 1974, 25 years after their introduction.

Check the 604 Operator's Manual and there, on page 64 begins the square root wiring plan:

Well, look at that. Start at 3 for a 1 or 2 digit number, apply four iterations and you have a square root accurate to 8 digits. Has anyone studied this stuff and written up the math behind it?

Glad you asked. Enter Preston C. Hammer. Dr. Hammer was the go-to guy at Los Alamos for Monte Carlo calculations on their CPCs during the late 1940s and early 1950s; he later founded the Computer Science departments at Wisconsin and Penn State; he looks remarkably like Santa Claus and yet he has no Wikipedia entry, just this scattered biography.

At the December, 1949, IBM Computation Seminar in Endicott, NY, Hammer presented a paper showing the optimal starting value for any root over any interval when employing Newton's method. It turns out that the square root of 10 is the optimum starting value for the interval of 1 to 100 when calculating a square root. Further, Hammer notes the expected accuracy for 3, 4, 5, and 6 iterations. Six iterations gets us to around 18 digits of accuracy.

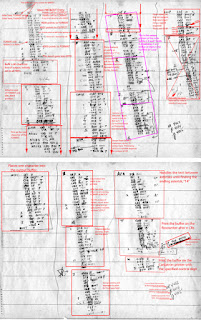

At about this point in my research, Paul Kimpel had gotten his 220 emulator on-line and managed to get the BALGOL compiler working. It turned out that BALGOL really did think the square root of 16 is 3.9999998 I had not blown the translation to the 205. Better yet, Paul found a copy of several math subroutines among the Don Knuth papers in the possession of the Computer History Museum, including a short, elegant square root implementation. These were developed for Clevite Corp on their Burroughs 205. Paul and I are guessing that these were what Knuth used in the ALGOL implementation or at least somewhat close.

With all that theory behind us, let's take a look at the two very different methods that the Burroughs 205 and Burroughs 220 Algol compilers use to take a square root. The examples here assume you are familiar with the format of floating point numbers on the 205/220.

BALGOL on the Burroughs 220

Step 1. The subroutine deals with the exponent, essentially cutting it in half and remembering whether it was odd or even.

Step 2. The subroutine calculates a square root of the mantissa, always taking the square root of a number between 1 and 10. It does this by developing a second order polynomial estimate of

0.62697923 + 0.41117107 x M - 0.016450338 x M2.

This gets us to within about 2% of the square root of the mantissa, M.

Step 3. The subroutine then applies two iterations of Newton's "divide and average" method to produce a sufficiently accurate final square root of the mantissa.

Step 4. Now we check to see if the original exponent was odd or even. Since the square root of 1.6 does not have the same sequence of digits as the square root of 16, a multiplier of √10 is applied if the exponent was odd. (this is a slightly simplified explanation if you are looking at the actual code.) This is where we lose that tiny bit of accuracy for 16, 25, 36 etc., versus 1, 4, and 9.

Burroughs Algebraic Compiler for the Burroughs 205

Don Knuth's approach is classical Newton's Method.

Step 1. Use a fixed point multiply to cut both the exponent and mantissa in half. E.G. 12,345,678 starts out as 5812345678 and becomes 2906172839

Step 2. Add 2550000000 to the new value. The above example becomes 5456172839

Step 3. Add 1 to the first digit of the mantissa if it is still even - this primarily fixes a problem arising if that digit has become zero. It makes no difference in this example. We now have 5456172839 = 5617.2839

Step 4. Do 5 iterations of Newton's method "divide and average."

Comparison of Methods

I ran a couple of different tests on the two implementations.

Test 1. I took the square root of integer values between 1 and 100 looking at the difference between the original value and the square of the square root of that value.

Over the 100 numbers I summed the absolute values of those differences and also looked at the maximum difference. On the BALGOL implementation, the sum of the differences was 0.00027920 with a maximum difference of 0.00000800 On Knuth's implementation, the sum of the differences was 0.00035170 with a maximum of 0.00001000

Knuth's result is tiny bit less accurate when measured this way but makes up for this, in my opinion, with much more aesthetically appealing square roots of 16, 25, 36, 49, 64 and 81.

Test 2. I ran a test of square roots of powers of 2 and powers of 10 to see how Knuth's implementation held up for large and small values. The results were quite satisfactory. 2 to the 26th power is the largest power of 2 to fit in 8 digits: 67,108,864 with a square root of 8,192 and this algorithm was right on the money. For powers of 10, this algorithm produced results beginning with 10 or 3162276 up through 10 to the 47th.

Not of minor significance: This Knuth Clevite subroutine fits in 27 words versus the 26 I was trying to match. I still can't figure out how he did that!

If you like to play with this stuff, the number of iterations used in the Clevite implementation can be found twelve words into the subroutine. It is the "address" on a Unit Adjust instruction (06). It is used to set the B-register to 4 which results in 5 iterations. Cut it to one less and the routine gives identical results for values between 1 and 100. Cut it to 3 and you are in serious error territory. Raise it to 5 or 6 and you should be well on your way to your own double precision implementation.

8/30/2022 NOTE: This post has been edited to correct some typos and formatting errors. It also contains incorrect information on the source of the Knuth Clevite code. This will be corrected in my next post.